As a software developer, it’s essential to continue learning throughout your career. Technology evolves rapidly, and the rise of AI has turned the tech world upside down. That’s why Kelly Hellinx from Teal Partners enrolled in the Master of Artificial Intelligence program at KU Leuven. His thesis focuses on the use of Large Language Models in customer support.

Kelly Hellinx: “In a list of possible topics from my supervisor, a question from DNS Belgium caught my attention. That company manages the domain names .be, .brussels, and .vlaanderen. They wanted to investigate how AI and Large Language Models, or LLMs for short, can be applied in customer support. That appealed to me, especially because that knowledge can also be useful at Teal Partners, for example in the payroll software we develop.”

“With my AI degree in hand and with the help of an intern, we are now going to specifically investigate at Teal Partners what LLMs can mean for our customers.” Kelly Hellinx, developer at Teal Partners

Kelly Hellinx: “DNS Belgium receives many support queries, often on the same topics. The company wanted to know if a language model can automatically answer certain questions, or formulate a proposal for an answer. I created a proof of concept based on their anonymized data. They provided me with thousands of support queries and corresponding answers from the past two years. Due to privacy concerns, they did not want to use ChatGPT, but rather an open-source language model that handles the data confidentially.”

Kelly Hellinx: “A language model works with words. It assigns a number to a word. Through parameters, related words are linked to each other based on the probability that they occur together. You can refine an existing language model, in other words, adjust it to your own knowledge. Based on thousands of real support queries from DNS Belgium and their corresponding answers, I trained a model. Without the right knowledge, a model hallucinates. It generates an answer to your question, but that answer usually doesn’t match the facts. In customer support, every answer must be 100% correct.”

“But training a model requires a lot of money. It requires enormous computer power and super powerful hardware. Moreover: if the information changes, or if new questions come in, or if there’s a new version of the language model, then you have to retrain. That’s not feasible in practice.”

Kelly Hellinx: “With a technique called RAG, Retrieval-Augmented Generation. It combines generating text with retrieving information. You first let the system search for relevant information in specific sources, and then you send it together with the question to the language model. The model uses that information as context to generate a text. It’s not just based on the original dataset it was trained on, but it formulates better answers thanks to those specific information sources.”
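The two RAG steps described above, retrieving first and then sending the result along with the question, can be sketched roughly as follows. This is only an illustration, not the proof of concept from the thesis: the documents are invented examples, the retrieval is a naive word-overlap ranking, and `retrieve` and `build_prompt` are hypothetical helper names. The resulting prompt would then be passed to whichever language model you use.

```python
import re

# Invented example snippets, NOT taken from DNS Belgium's data.
DOCUMENTS = [
    "A .be domain name can be transferred to another registrar with a transfer code.",
    "A domain name registration is renewed every year through your registrar.",
    "Invoices are sent by the registrar, not by the registry.",
]


def tokenize(text):
    """Lowercase the text and return its set of alphabetic words."""
    return set(re.findall(r"[a-z]+", text.lower()))


def retrieve(question, documents, top_k=2):
    """Step 1: rank documents by how many words they share with the question."""
    question_words = tokenize(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(question_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(question, documents, top_k=2):
    """Step 2: put the retrieved snippets and the question into one prompt."""
    context = "\n".join(f"- {doc}" for doc in retrieve(question, documents, top_k))
    return (
        "Answer the question using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {question}\n"
    )


prompt = build_prompt("How do I transfer my domain name?", DOCUMENTS)
print(prompt)
```

Because the knowledge lives in the documents rather than in the model, updating an answer means editing a snippet instead of retraining.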

“The challenge is to search correctly. Typically, this is done with keyword-based search, for example on the term ‘domain name’. If this term appears often in the text, it’s likely a relevant source. But even if a sentence strongly resembles another sentence, they can still have completely different meanings. The same words can have multiple meanings, such as ‘bank’. Or by adding the word ‘not’, a sentence gets a completely different meaning. That’s why there’s a second search technique, called semantic search, based on text fragments. You convert the meaning of a text fragment into numbers. If the meaning matches the information in your knowledge domain, then that source is likely relevant. You feed it to your language model.”
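A small sketch may help contrast the two search techniques. The keyword score below simply counts shared words, while the semantic score compares vectors with cosine similarity. Note the assumptions: the three-dimensional vectors are made up by hand purely for illustration, whereas a real system would obtain them from an embedding model, and real embeddings do not always separate a negated sentence this cleanly.

```python
import math


def keyword_score(query, text):
    """Fraction of the query's words that also occur in the text."""
    query_words = set(query.lower().split())
    text_words = set(text.lower().split())
    return len(query_words & text_words) / len(query_words)


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(x * x for x in b))
    return dot / (norm_a * norm_b)


# Hand-made toy "embeddings" (an assumption for this sketch, not model output).
EMBEDDINGS = {
    "my domain name is active": [0.9, 0.1, 0.2],
    "my domain name is not active": [-0.8, 0.2, 0.3],
    "my website address works": [0.8, 0.2, 0.1],
}

query = "my domain name is active"
for text, vector in EMBEDDINGS.items():
    print(
        f"{text!r}: keyword={keyword_score(query, text):.2f}, "
        f"semantic={cosine_similarity(EMBEDDINGS[query], vector):.2f}"
    )
```

In this toy setup the negated sentence gets a perfect keyword score because it contains every query word, while the paraphrase with almost no shared words scores low. The vector comparison ranks them the other way around, which is the behaviour semantic search aims for.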

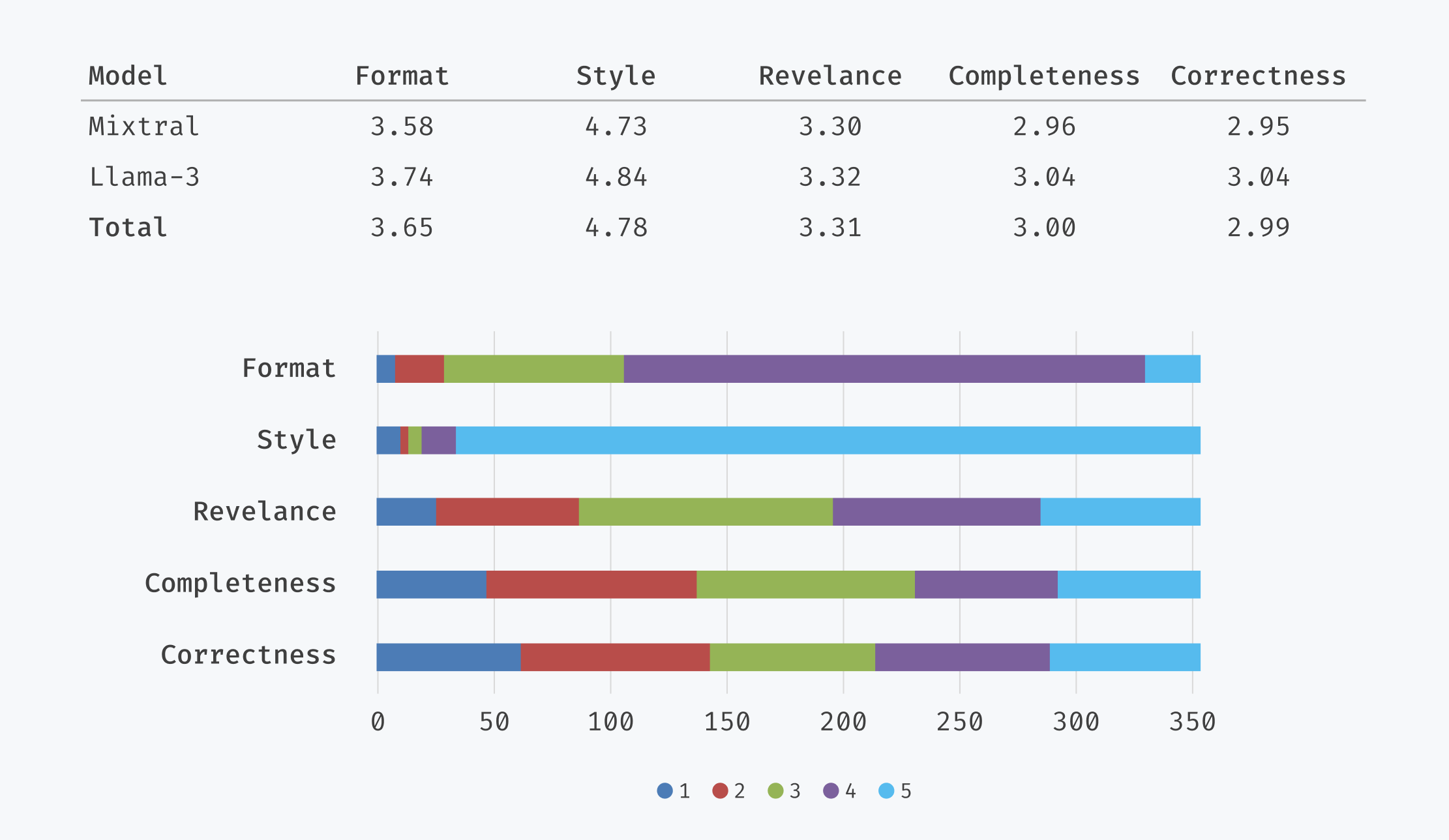

Kelly Hellinx: “My research shows that LLMs can help with customer support. But the model I built is not ready to be plugged in. It needs to be further refined. Six hundred answers from the language model were evaluated by a customer support manager at DNS Belgium. He evaluated the format, style, relevance, completeness, and correctness of the answer on a scale of 1 to 5. Format and style were fine, but the relevance of the answers needs to improve by training the model further.”

Kelly Hellinx: “AI is at the peak of its popularity. Billions of dollars are being invested in it. Many companies want to do ‘something with AI’, but what? They’re groping in the dark. Finding a meaningful application is not obvious. What makes work more efficient? How can you help customers with AI? How can you monetize it commercially? After the hype, the disillusionment will soon follow. You need the right know-how and the investment is high. How can you control the accuracy of your results for customers? What are the ethical implications? There are still many questions to answer. Gartner did interesting research on the hype around AI.”

Kelly Hellinx: “With my degree in hand and with the help of an intern, we are going to specifically investigate at Teal Partners what AI and LLMs can mean for our customers. We want to develop a proof of concept to discover what can be integrated in our payroll software. Can AI help us answer common questions from users? That’s the first concrete application we want to further investigate.”

LLMs, RAG, parameters, and models: for those not familiar with the jargon, it often sounds like gibberish when it comes to AI. Who better to explain it to us than ChatGPT itself? Four simple questions, explained by the most popular language model of the moment.

An LLM understands and generates text. It bases its answers on the patterns of text it has learned through machine learning. The model is fed with billions of words and sentences from various texts, allowing it to learn the structure of language. An LLM predicts the text that is most likely to follow, based on what it has learned.
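The idea of predicting the most likely next word can be illustrated with a deliberately tiny stand-in: a model that only counts which word follows which in a short example text. This is an assumption-laden simplification, since a real LLM uses a neural network trained on billions of words rather than a count table, and the function names and example corpus are invented for this sketch.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for every word, which words follow it and how often."""
    words = text.lower().split()
    following = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following


def predict_next(model, word):
    """Return the word seen most often after `word`, or None if unseen."""
    candidates = model.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


# Invented example text used as "training data".
corpus = "the domain name is registered the domain name is active the domain is free"
model = train_bigram_model(corpus)

print(predict_next(model, "domain"))
print(predict_next(model, "the"))
```

Here "name" follows "domain" twice and "is" only once, so the model predicts "name". The principle is the same one the paragraph describes: the prediction reflects patterns in the text the model has seen.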

The best-known models include GPT from OpenAI, LLaMA from Meta, and Google’s models BERT, T5, and PaLM. There are also open-source models, such as BLOOM. Many companies and research institutes are now building their own models, which is very expensive.

An LLM contains millions to billions of parameters. A parameter is a weight that determines the strength of the relationship between certain words. The model converts words into numbers that are processed by a neural network, and learns the relationships between the numerical representations of those words. A smaller model has fewer parameters and is therefore generally less accurate. Training a model costs thousands of euros for a small language model and runs into the millions for the larger models.
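To make "words into numbers" and "parameters" a bit more concrete, here is a rough sketch. It assigns each word an integer ID and then counts the weights of a hypothetical, extremely simple model: one table that turns each word ID into a vector, plus one output layer that scores every word in the vocabulary. Real LLMs have many more layers, so the function names and the architecture are assumptions chosen only to show where parameter counts come from.

```python
def build_vocabulary(text):
    """Assign each distinct word the next free integer ID."""
    vocab = {}
    for word in text.lower().split():
        vocab.setdefault(word, len(vocab))
    return vocab


def count_parameters(vocab_size, embedding_dim):
    """Count the weights of the toy model described above."""
    embedding_weights = vocab_size * embedding_dim  # one vector per word
    output_weights = embedding_dim * vocab_size     # vector -> score per word
    output_biases = vocab_size                      # one bias per word
    return embedding_weights + output_weights + output_biases


vocab = build_vocabulary("the domain name is the address")
token_ids = [vocab[word] for word in "the domain name".split()]

print(vocab)
print(token_ids)
print(count_parameters(len(vocab), embedding_dim=4))
print(count_parameters(50_000, embedding_dim=512))
```

Even this two-part toy model reaches tens of millions of weights once the vocabulary grows to 50,000 words, which gives some feel for why full-size models end up with billions.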

The AI system first searches a specific database for relevant information. It uses that information as context to generate a text. This way, the model is not limited to what it learned during its training period, but can also use current or domain-specific information.